Panel interviews: when they actually work, when they don't, and what's replacing them

Panel interviews were designed to reduce bias by triangulating multiple interviewers. In practice they usually amplify the loudest panelist's opinion and produce a worse candidate experience. Here's the version that actually works.

AI summary

- A panel interview puts multiple interviewers in one session with one candidate. The original argument for the format was bias reduction through triangulation. The actual outcome in most rooms is consensus drift toward the most senior or loudest panelist.

- Panels work in two specific situations: highly cross-functional roles where the panelists are evaluating different criteria, and roles where the candidate's interaction style with multiple stakeholders is itself the signal. Outside those two, panels are mostly performative.

- The replacement most teams are converging on is structured async screening (one-way interview answers reviewed independently by each evaluator) plus a smaller live panel of two, not four, for the deciding round. Same evaluation depth, lower scheduling cost, better candidate experience, less consensus drift.

The first panel interview I sat in on as a hiring manager had five interviewers and one candidate. We met for an hour. The candidate fielded questions from a director of engineering, a VP of product, the founder, the head of CX, and me. We came out of the room and the founder said “I liked her.” The other four of us proceeded to agree, with progressively diminishing intensity, that we’d also liked her. We hired her. She left in five months.

The autopsy was easy. The founder had liked the candidate’s calm under pressure, which was real. The rest of us had taken the founder’s read as a calibration anchor and then spent the debrief ratifying it, measuring everything else against that calm under pressure. None of us had been asked to evaluate a specific criterion. We’d been asked to “form an impression” in parallel and then defend it. That’s not triangulation. That’s a slightly more expensive version of one person deciding.

The version of the panel interview most teams run is broken in a specific, well-documented way. The version that works is narrow, structured, and rare. This post is about the difference between the two.

What a panel interview is supposed to do

The original argument for panel interviews comes out of industrial-organizational psychology. If a single interviewer carries unconscious bias — toward the candidate’s school, accent, presentation style, name — then triangulating multiple interviewers should average the bias out. The wisdom-of-crowds version of recruiting.

The argument depends on a critical assumption: the interviewers form their judgments independently. If their judgments are independent, averaging them improves accuracy. If their judgments are correlated — because they’re sitting in the same room, hearing the same answers, watching the same body language, picking up on each other’s nods and frowns — averaging them doesn’t reduce bias. It encodes the bias of the loudest interviewer into a group consensus that looks more rigorous than it is.
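The independence assumption can be made concrete with a standard statistics identity for the variance of an average. What follows is a minimal sketch, assuming every interviewer’s judgment carries the same amount of random error (`sigma`) and every pair of interviewers shares the same correlation (`rho`). Both are simplifications, and the inputs are illustrative, not measured from real panels.

```python
def variance_of_panel_mean(n: int, sigma: float, rho: float) -> float:
    """Error variance of the average of n judgments.

    Each judgment has noise with standard deviation sigma, and every pair
    of judgments is assumed to share the same correlation rho.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must be between 0 and 1 in this sketch")
    # Var(mean) = sigma^2 * (1/n + (n - 1)/n * rho)
    return sigma**2 * (1.0 / n + (n - 1) / n * rho)


# Illustrative inputs only (assumptions, not data).
solo = variance_of_panel_mean(1, sigma=1.0, rho=0.0)
independent = variance_of_panel_mean(5, sigma=1.0, rho=0.0)
correlated = variance_of_panel_mean(5, sigma=1.0, rho=0.8)

print(round(solo, 2), round(independent, 2), round(correlated, 2))
```

With these made-up inputs, five independent judgments cut the error variance from 1.0 to 0.2, while five judgments correlated at 0.8 only get it down to 0.84. The sketch covers random error only; a bias that every panelist shares doesn’t shrink with averaging at all.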

Asch’s classic 1951 conformity studies are the version of this most people know. Solomon Asch put subjects in groups with confederates who gave obviously wrong answers about line lengths; subjects went along with the wrong answer on roughly a third of the critical trials. The interview-room version of Asch is less dramatic and more common: panelists adjust their assessments in real time as they read each other’s reactions. The “averaging” the panel produces is biased toward the panelist with the most apparent confidence, the most seniority, or the closest relationship to the hiring manager.

This is the part most panel-interview defenses leave out. Multiple interviewers don’t reduce bias when they’re influencing each other’s judgments. They redistribute the bias from the individual to the group, and the group’s bias is harder to detect because everyone agrees.
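A toy model of that redistribution, assuming each panelist’s stated score is a blend of their private score and the score of one anchor panelist. The `pull` parameter, the 1-10 scale, and the scores themselves are hypothetical, chosen only to show the mechanism.

```python
from statistics import mean


def drift_toward_anchor(private_scores, anchor_index, pull):
    """Blend each private score with the anchor panelist's score.

    pull = 0.0 means nobody is influenced; pull = 1.0 means everyone
    simply adopts the anchor's read.
    """
    if not 0.0 <= pull <= 1.0:
        raise ValueError("pull must be between 0 and 1")
    anchor = private_scores[anchor_index]
    return [s + pull * (anchor - s) for s in private_scores]


# Hypothetical 1-10 scores; index 0 is the most senior person in the room.
private = [9, 5, 6, 4, 6]
stated = drift_toward_anchor(private, anchor_index=0, pull=0.7)

private_mean = mean(private)
stated_mean = mean(stated)
private_spread = max(private) - min(private)
stated_spread = max(stated) - min(stated)

print(private_mean, round(stated_mean, 2))
print(private_spread, round(stated_spread, 2))
```

In this example the private scores average 6 with a spread of 5 points. After the pull, the stated scores average about 8.1 with a spread of about 1.5. The group looks more aligned and more positive, and almost all of that movement is the anchor’s opinion counted several times.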

When panels actually work

Two cases. They’re narrow but real.

Case 1: Cross-functional triangulation with distinct criteria

A platform engineering hire who’ll work daily with product managers, security, and the existing eng team. The engineer evaluates technical depth and architecture thinking. The PM evaluates communication and stakeholder management. The security lead evaluates threat-modeling instincts. Each panelist owns a different criterion that they’re the right person to judge.

In this case the panel works because the interviewers aren’t averaging the same judgment. They’re contributing three separate evidence points to a composite picture. A score of 8/9/4 means something different from 4/4/9, and both are usefully different from 7/7/7. The triangulation is real.

The structural requirement: each panelist has to know what they’re evaluating before the interview starts. Written down. Mapped to a scorecard. If the panel walks in and improvises “I’ll just ask my favorite questions,” the case collapses back into the broken version.
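One way to picture “written down, mapped to a scorecard” is a record per panelist that ties a name to a single criterion and a score. This is a sketch under assumptions: the 1-10 scale, the `floor` threshold, and the field names are hypothetical, and the criteria are the three from the platform engineering example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CriterionScore:
    panelist: str
    criterion: str
    score: int  # assumed 1-10 scale


def summarize(scores, floor=5):
    """Return the profile, its mean, and any criteria scored below the floor."""
    values = [s.score for s in scores]
    return {
        "profile": "/".join(str(v) for v in values),
        "mean": mean(values),
        "below_floor": [s.criterion for s in scores if s.score < floor],
    }


def card(tech, comms, threat):
    # Each panelist owns exactly one criterion.
    return [
        CriterionScore("engineer", "technical depth", tech),
        CriterionScore("PM", "communication", comms),
        CriterionScore("security lead", "threat modeling", threat),
    ]


strong_but_gap = summarize(card(8, 9, 4))
flat = summarize(card(7, 7, 7))

print(strong_but_gap)
print(flat)
```

The 8/9/4 and 7/7/7 candidates have the same mean of 7, which is why a single averaged number hides the difference. Only the per-criterion view shows that the first candidate has a threat-modeling gap and the second has none.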

Case 2: Multi-stakeholder behavior as the signal itself

For some roles, how the candidate handles multiple stakeholders simultaneously is the job. A senior account executive talking to a procurement officer, a technical buyer, and a CIO at the same time. A head of marketing presenting to a CEO and a CFO with different priorities. A customer success leader managing a steering committee.

For these roles, watching the candidate triangulate the room is the test. The panel format isn’t a triangulation method — it’s a simulation of the actual work. The signal is in how the candidate adjusts their answers, redirects when stakeholders interrupt, and finds the answer that satisfies multiple priorities at once. A solo interview can’t generate that signal because there’s nobody to manage.

This case is rarer than the first one. Most roles don’t actually require multi-stakeholder real-time management as a core competency. Teams over-apply the simulation logic to roles where it doesn’t fit.

When panels are mostly performative

Most of the time.

The classic version: a 4-5 person panel where everyone is evaluating a vague “fit” criterion, no panelist owns a specific competency, the questions are improvised, and the debrief is a group discussion that quickly converges on whatever the most senior person in the room thought. The candidate spends an hour in a high-pressure social performance. The team spends an hour producing a single anchored consensus they could have produced with one good interviewer at a fraction of the headcount cost.

The reasons this format persists are mostly social and political:

- “We want everyone to have a chance to meet the candidate.” This treats the interview as a stakeholder-buy-in exercise, not a signal-gathering one. If the goal is buy-in, fine — but then call it that and don’t expect bias reduction as a side effect.

- “We want consensus.” Consensus before someone joins the team is overrated as a hiring signal. Most great hires have at least one panelist who was lukewarm.

- “It’s faster than running 4 separate interviews.” True, and the reason most teams do it. But the bias cost of doing four interviews in parallel inside one room is higher than the calendar cost of four sequential interviews of 30 minutes each — if you actually want the four interviewers’ independent judgments.
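The calendar trade-off in that last point can be put in rough numbers. A small sketch, assuming a four-person panel that runs 60 minutes (the “hour” from the classic version above) against four sequential 30-minute interviews; the function and field names are hypothetical.

```python
def loop_cost(interviewers, minutes_per_session, sessions_for_candidate):
    """Rough time cost of one interview stage.

    Every interviewer attends exactly one session of minutes_per_session.
    The candidate attends sessions_for_candidate sessions of that length.
    """
    return {
        "interviewer_minutes": interviewers * minutes_per_session,
        "candidate_minutes": sessions_for_candidate * minutes_per_session,
        "calendar_slots": sessions_for_candidate,
    }


# One room, four interviewers, one hour.
panel = loop_cost(interviewers=4, minutes_per_session=60, sessions_for_candidate=1)

# Four separate 30-minute interviews, one interviewer each.
sequential = loop_cost(interviewers=4, minutes_per_session=30, sessions_for_candidate=4)

print(panel)
print(sequential)
```

On these assumptions the panel costs 240 interviewer-minutes and the sequential loop costs 120. What the panel saves is candidate time (60 minutes instead of 120) and calendar slots (one instead of four), which is the scheduling shortcut, not a saving in evaluator effort.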

The honest version is that panel interviews are usually a scheduling shortcut dressed up as a methodology improvement. The methodology improvement was the theory. The scheduling shortcut is the practice.

How candidates experience panels (and why it matters)

The candidate side of the panel format has been documented at length and ignored by most hiring teams. A 5-person panel is interpersonally exhausting. The candidate is reading five faces, adjusting tone for five sets of cues, fielding questions in five different styles, and watching for whose approval matters most. Most candidates’ performance degrades over 45-60 minutes of this in ways that have nothing to do with their actual qualifications.

The candidates who do well in panels tend to share traits: high social fluency, comfort in performative settings, fast verbal processing, and the kind of polish that comes from having sat in a lot of panels before. Those traits correlate with prior elite environments and confidence patterns that overlap heavily with the biases panel interviews were supposed to filter out. The candidates who do poorly in panels are often the ones the role actually needs — careful thinkers, deep technical operators, candidates from non-traditional backgrounds who haven’t logged panel reps.

In a competitive market, top candidates also turn down panels they perceive as gauntlets. Multiple recent recruiting surveys put interview loops of four or more rounds among the top reasons candidates withdraw from processes. Adding a 5-person panel to the loop loses you the candidates who have other options.

What’s replacing the broken version

The replacement most teams are converging on isn’t “no panels.” It’s a two-step decomposition of what the panel was trying to do.

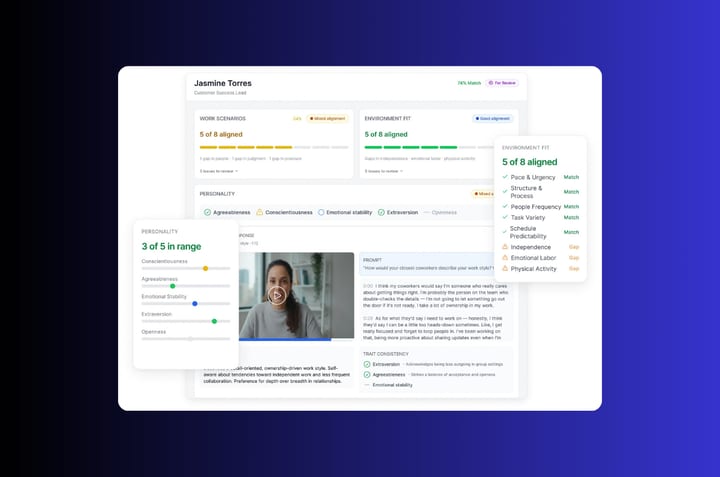

Step 1: structured async screening. The candidate answers a small set of one-way interview questions recorded asynchronously. Each evaluator on the team reviews the recordings independently, on their own time and without watching each other’s reactions, and submits a scorecard before any group discussion. This produces genuine independent judgments that can actually be averaged. Truffle is built for this layer specifically; AI Match ranks the candidates by criterion fit and each evaluator’s score lands in the same record, ready for a structured debrief.

The async screen does the triangulation work the panel was supposed to do, without the consensus-drift problem. It also takes 20-30 minutes of evaluator time spread across the week instead of an hour of synchronous time from five people on the same calendar.
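The rule that makes the async layer work is simple: nobody sees anyone else’s scores until everyone has submitted. A minimal sketch of that rule follows. The class and method names are hypothetical, and this is an illustration of the idea, not how Truffle or any other tool implements it.

```python
class BlindScorecards:
    """Collects one scorecard per evaluator and hides all of them
    until every expected evaluator has submitted."""

    def __init__(self, evaluators):
        self._expected = set(evaluators)
        self._scores = {}

    def submit(self, evaluator, scores):
        if evaluator not in self._expected:
            raise KeyError(f"{evaluator} is not on this review")
        if evaluator in self._scores:
            # Locked after submission so nobody revises toward the group.
            raise ValueError(f"{evaluator} has already submitted")
        self._scores[evaluator] = dict(scores)

    def pending(self):
        return sorted(self._expected - set(self._scores))

    def reveal(self):
        waiting = self.pending()
        if waiting:
            raise RuntimeError("still waiting on: " + ", ".join(waiting))
        return {name: dict(card) for name, card in self._scores.items()}


review = BlindScorecards(["hiring manager", "peer"])
review.submit("hiring manager", {"communication": 7})

try:
    review.reveal()
    revealed_early = True
except RuntimeError:
    revealed_early = False  # debrief is blocked until the peer submits

review.submit("peer", {"communication": 5})
all_scores = review.reveal()
print(all_scores)
```

Locking each scorecard on submission is a design choice in this sketch: it stops an evaluator from quietly adjusting a score after hearing which way the room is leaning.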

Step 2: a small live panel of two, for the deciding round only. Not for screening. Not for cross-functional buy-in. For the actual decision. The hiring manager plus one peer of the future hire, running a structured live interview against a specific scorecard, both having reviewed the async screening evidence before they walked in. The candidate gets a focused 45-minute conversation instead of a 60-minute social performance. The team gets two live judgments, each scored privately against the scorecard, to add to the async ones.

This collapses what used to be three rounds (recruiter screen, panel, final) into two rounds (async screen, live two-person panel) for most roles. The total evaluator time drops. The candidate experience improves. The signal density per minute of interview time goes up. And the bias-reduction theory that panels were supposed to deliver — independent judgments triangulating a fair view of the candidate — actually shows up in the data because the async layer makes the independence real.

What this means for your process

If you currently run a 4-5 person panel and aren’t sure whether you should: ask one question. Is each panelist evaluating a different criterion that they’re uniquely qualified to assess?

- If yes (and you can name the four criteria in one sentence each), the panel can work. Make sure each panelist has a written criterion, a question set, and a private scorecard they submit before the debrief.

- If no (and the four panelists are mostly evaluating “fit” or “did I like them”), the panel is producing an anchored consensus dressed up as triangulation. Replace it with an async screening layer plus a live panel of two on the deciding round.
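The yes/no question above can be written as a rough check on who owns which criterion. This is a sketch only: the list of vague criteria and the function name are hypothetical, and code can’t judge whether a panelist is actually the right person for their criterion. It only catches missing, vague, or duplicated assignments.

```python
# Hypothetical examples of criteria too vague to own.
VAGUE_CRITERIA = {"fit", "culture fit", "overall impression", "did i like them"}


def panel_check(assignments):
    """assignments maps each panelist to the one criterion they evaluate.

    Returns "keep the panel" only if every panelist has a specific
    criterion and no two panelists share the same one.
    """
    criteria = [c.strip().lower() for c in assignments.values()]
    has_vague = any(not c or c in VAGUE_CRITERIA for c in criteria)
    has_overlap = len(set(criteria)) < len(criteria)
    if has_vague or has_overlap:
        return "replace with async screen plus live panel of two"
    return "keep the panel"


distinct = panel_check({
    "engineer": "technical depth",
    "PM": "communication",
    "security lead": "threat modeling",
})

vague = panel_check({
    "director": "fit",
    "VP": "fit",
    "founder": "overall impression",
    "head of CX": "fit",
})

print(distinct)
print(vague)
```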

The version of panel interviews most companies run is a holdover from when async screening tools didn’t exist and getting multiple judgments meant putting people in the same room. The async layer is now table stakes. The panel format is overdue for the same upgrade.

Frequently asked questions about panel interviews

What is a panel interview?

A panel interview is a single interview session where multiple interviewers — usually three to five — evaluate one candidate together. It’s commonly used for cross-functional roles, senior hires, and situations where multiple stakeholders want a vote in the decision. The intended benefit is bias reduction through triangulation of independent judgments. The actual effect in most rooms is consensus drift toward the most senior interviewer’s read, because the panelists are not actually making independent judgments — they’re influencing each other’s assessments in real time.

Are panel interviews effective?

Panels are effective in two specific cases. The first is cross-functional triangulation where each panelist is the right person to evaluate a different criterion (an engineer evaluating technical depth, a PM evaluating communication, a security lead evaluating threat modeling). The second is roles where the candidate’s behavior with multiple stakeholders is itself the signal being tested. Outside those two cases, research on group dynamics — going back to Asch’s 1951 conformity studies — suggests panels amplify the loudest panelist’s opinion rather than averaging out individual bias.

How many people should be on a panel interview?

Two to three is the operational sweet spot. Beyond three, the consensus-drift problem outweighs the triangulation benefit, scheduling costs compound, and the candidate’s interpersonal load degrades their performance in ways unrelated to actual fit. Anything past four is usually performative — a way to signal that many stakeholders met the candidate without actually adding evaluative signal.

What’s the difference between a panel interview and a group interview?

A panel interview is many interviewers evaluating one candidate. A group interview is one interviewer (or a small panel) evaluating many candidates in the same session, often used for high-volume hourly hiring or assessment-center formats. The two formats get confused because both involve more than two people in the room, but they answer different questions. Panels test how a candidate handles cross-functional stakeholders. Group interviews test how candidates compare against each other in real time.

How do you run a good panel interview?

Cap the panel at two or three people. Assign each panelist a different criterion to evaluate, written down before the interview starts and mapped to a scorecard. Have each panelist submit their independent score to the scorecard before the debrief, not after. Run the debrief from the scorecards, not from impressions. Keep the live panel session for the deciding round only. Earlier rounds run faster, cleaner, and with better independence as async screening interviews that each evaluator reviews privately.